On 20 January 2026, the House of Commons Treasury Committee published its report into artificial intelligence in financial services and reached a conclusion that was unusually direct by parliamentary standards. The Bank of England, the FCA, and HM Treasury were, it said, exposing consumers and the financial system to potentially serious harm. Not through excessive regulation. Through insufficient attention.

The specific charge was a "wait-and-see" approach. The Committee concluded that regulators were watching AI being deployed across UK financial services without adequate frameworks for dealing with what happens when it goes wrong.

The Scale of Deployment

More than 75 per cent of UK financial services firms are now using AI, according to findings cited in the Treasury Committee report, with the highest take-up among insurers and international banks. The applications range from fraud detection and credit scoring to customer-facing services and trading decisions.

The pace of deployment has outrun the pace of regulatory clarity. The FCA's current position is that existing frameworks are sufficient and that no AI-specific rulebook is required. The Treasury Committee's position is that this approach is inadequate.

What the Committee Identified

The risks the Committee highlighted are specific and structural. AI-driven decisions in credit and insurance may lack the transparency required for consumers to understand or challenge them. AI-enabled fraud is growing in scale and sophistication. When many algorithmic systems make similar decisions at the same time, they create conditions for herding behaviour in markets. And the concentration of AI and cloud infrastructure in a small number of providers creates a systemic dependency that no single regulated firm controls.

Running through all of these is a common thread: diffused accountability. When a consumer is denied credit, or misled by an AI-driven financial tool, or caught in a fraud that an AI system failed to prevent, the question of who bears responsibility becomes genuinely difficult to answer.

The Mills Review

One week after the Treasury Committee report, on 27 January 2026, the FCA launched the Mills Review, a long-term examination of how AI will reshape retail financial services by 2030. Led by Executive Director Sheldon Mills, the review is looking beyond current applications to examine how increasingly interconnected AI systems might change market structure, consumer behaviour, and the regulatory approaches needed to govern them.

The FCA's own framing was candid. It noted that it does not yet know which risks will matter most, or which mitigations will actually work. For a regulator whose position is that existing frameworks are sufficient, that acknowledgement carries some weight.

The Accountability Question

The Treasury Committee's most pointed recommendation was not about stress-testing or technical standards. It was about accountability. The FCA should, the Committee said, publish guidance making clear who in regulated organisations is responsible for harm caused through the use of AI, and what level of assurance senior managers are expected to provide.

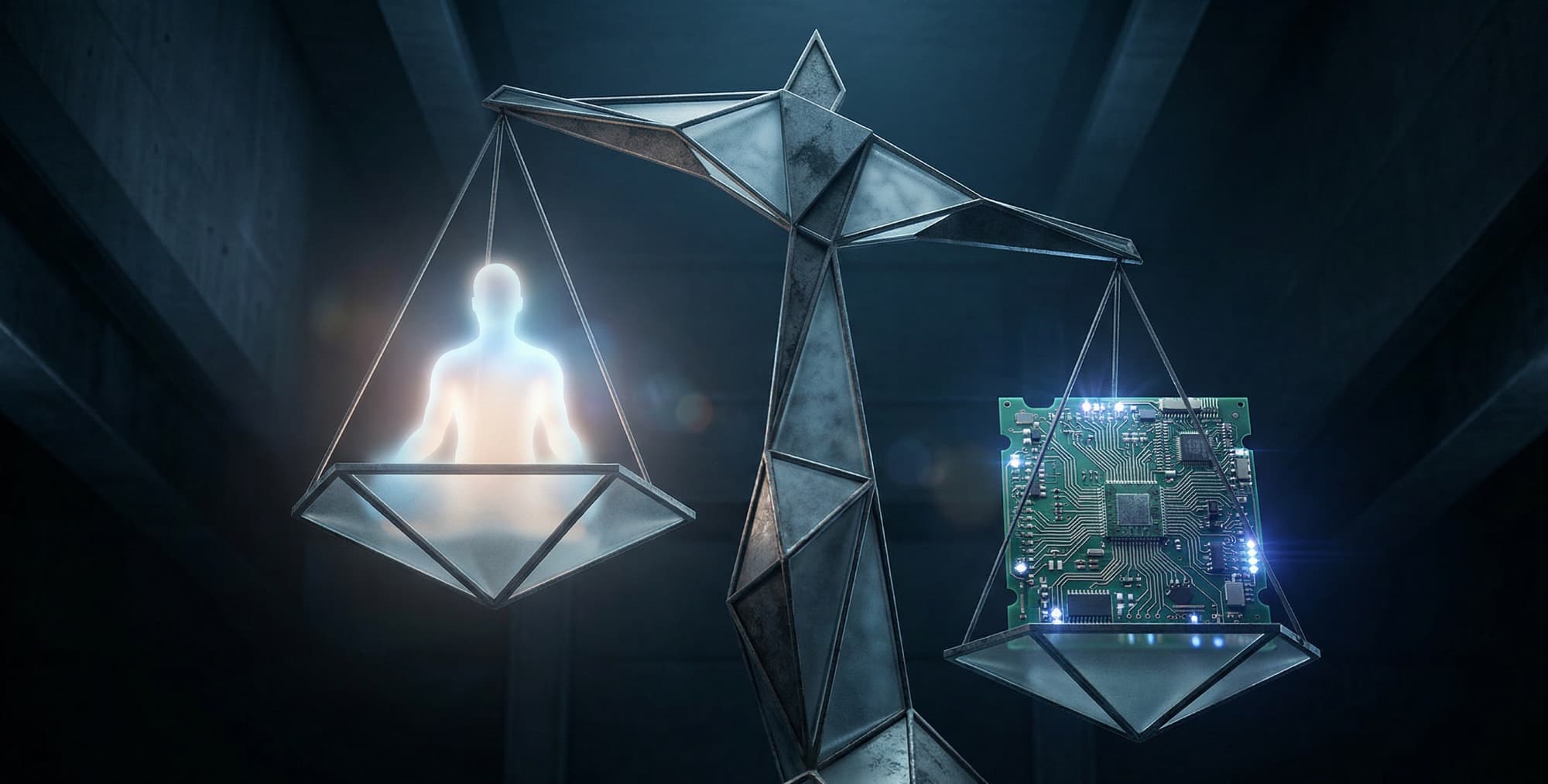

That recommendation identifies something the current framework has not resolved. An AI system that makes a credit decision is not a person. It cannot be called before a committee. It cannot hold a senior manager function. But the harm it causes is real, and someone must answer for it.

Opinion: The Architecture of Answerability

The Treasury Committee's report is significant not only for what it recommends but for what it reveals. The deployment of AI across UK financial services has proceeded faster than the institutional capacity to assign responsibility for what it does. That gap is not a regulatory failure in the conventional sense. It is an architectural one.

Systems that make consequential decisions about people's financial lives were designed to be efficient. Accountability was not built in as a structural property. It was assumed to follow from the fact that a regulated firm deployed the system. The Treasury Committee's concern is that this assumption is increasingly difficult to sustain as the AI's role in the decision grows and the human's role diminishes.

The question the Mills Review will have to answer is not whether AI can be made accountable through guidance and governance. It is whether accountability can survive being delegated to a model at all. The firms that answer that question well are not those that produce the clearest audit trail after the fact. They are those that constrain what the model can decide in the first place.
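To make that last point concrete, here is a minimal sketch of what constraining a model's decision space could look like in a credit setting. It is an illustration only, not anything drawn from the Committee's report or the FCA's review: the thresholds, the `gate` function, and the `credit-risk-owner` role are all hypothetical. The model may approve small, high-confidence applications on its own; everything else, including every potential refusal, is referred to a named human role.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    APPROVE = "approve"
    REFER = "refer"  # hand the case to an accountable human


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    decided_by: str  # "model", or the role that must take the decision
    reason: str


# Hypothetical limits, chosen purely for illustration.
MAX_AUTO_AMOUNT = 5_000
MIN_AUTO_SCORE = 0.80
ACCOUNTABLE_ROLE = "credit-risk-owner"  # placeholder, not a real senior manager function


def gate(model_score: float, amount: int) -> Decision:
    """Let the model approve only inside narrow bounds; it can never decline."""
    if amount <= MAX_AUTO_AMOUNT and model_score >= MIN_AUTO_SCORE:
        return Decision(Outcome.APPROVE, "model", "within automatic approval limits")
    # Anything outside the bounds is not decided by the model at all.
    return Decision(Outcome.REFER, ACCOUNTABLE_ROLE, "outside limits; human decision required")


if __name__ == "__main__":
    print(gate(0.91, 1_200))   # approved by the model
    print(gate(0.91, 20_000))  # referred: amount too large
    print(gate(0.40, 1_200))   # referred: the model may not refuse on its own
```

The design choice is that responsibility is fixed before any decision is made: the type of outcome the model is allowed to produce is limited in code, so an adverse decision always has a human owner rather than an audit trail reconstructed afterwards.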

Sources

House of Commons Treasury Committee, Artificial intelligence in financial services (report), 20 January 2026

FCA, Mills Review into the long-term impact of AI on retail financial services, 27 January 2026